I started to try to explain how AI coding is changing traditional software. Not software development, but software in general. The best I could come up with on a Friday afternoon was the pottery example - to the general user, software was like pottery. Someone crafts an application like a pot. It has a size and shape, it is fit for purpose (mostly), and then it gets fired: set in place, unchangeable by the user. Users buy different building blocks of software that usually fit together pretty well, but they can't change them. Feature suggestions sent to companies often get filed directly into the trash bin. Features and updates come from the top down and are rarely an actual response to customer feedback.

Open source is a bit different. You deploy the software, but if you have the skill you can modify it, or you can convince the community supporting the project to incorporate features. This is like a dry clay pot that hasn’t been fired yet. You can wet it down, rework it into a new shape and let it dry again. It’s more malleable, but only by so much. New features often must be crafted around what makes sense for the project as a whole. Projects won’t generally merge code updates that serve a very specific niche, especially if those changes break functionality elsewhere or require specific operating environments that many users are unlikely to have. Truly customized features are maintained offline in forks that will never be part of a pull request.

I am finding that I can pretty much take any open source project and modify it to work for exactly my needs. In theory I could also decompile other software and fairly easily modify it with AI tools that can follow the code with little need for variable names and debugging symbols. Software in this context feels completely malleable, like wet clay. The changes I make are so excruciatingly personalized that I know a PR would make the project developers fold themselves in half. The code becomes assimilated into my personal hoard of increasingly esoteric tool sets.

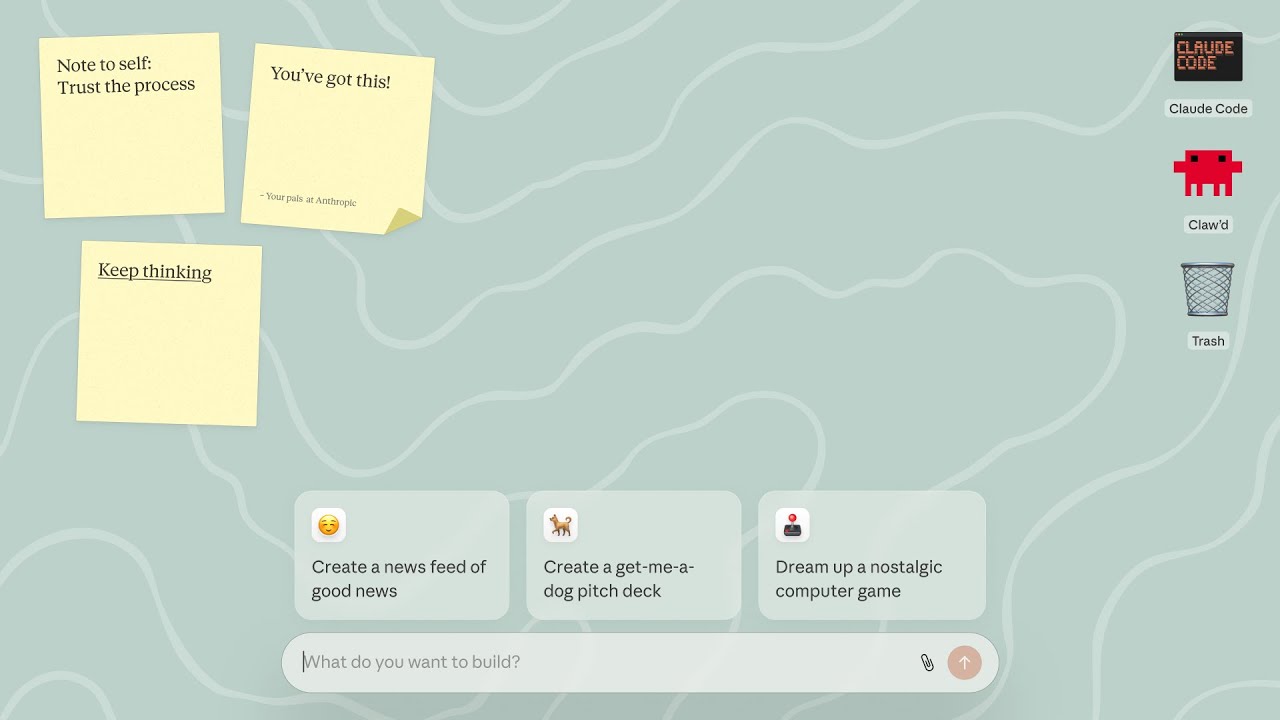

I am trying OpenClaw. I have a Nextcloud instance, so I wanted to chat with OpenClaw via Talk, the Nextcloud messaging system. There is a plugin built into the OpenClaw project, but it’s broken for my needs. I wanted more things like “typing…” notifications while the LLM is generating a response, which are not available through the Nextcloud bot API. So I created an actual user account for OpenClaw and implemented (with Claude Code) user API integrations to create this little feature. My AI-developed code changes are not suitable for a PR without extra considerations, like falling back properly to bot-only operation if no user account exists, or creating a configuration method that lets people provide the user name and password for user API access. These things are simply hard coded into my deployment because those considerations are not necessary for ME, as they might be for “the community”.
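To make the gap between "works for ME" and "suitable for a PR" a bit more concrete, here is a rough sketch of the kind of thing the community version would need: credentials that come from configuration instead of being hard coded, with a fallback to bot-only mode when no user account is supplied. This is not OpenClaw's code and it makes no Nextcloud API calls. The names `TalkConfig`, `load_talk_config`, `TALK_USER` and `TALK_APP_PASSWORD` are all made up for illustration.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class TalkConfig:
    """Hypothetical settings for a Talk integration (illustrative names only)."""

    mode: str  # "user" when account credentials are present, otherwise "bot"
    username: Optional[str] = None
    password: Optional[str] = None

    @property
    def supports_typing_indicator(self) -> bool:
        # Assumption taken from the post: typing notifications need a real
        # user account and are not available in bot-only mode.
        return self.mode == "user"


def load_talk_config(env: Mapping[str, str] = os.environ) -> TalkConfig:
    """Build the config from environment-style variables.

    TALK_USER and TALK_APP_PASSWORD are invented variable names. If either
    one is missing or empty, fall back to bot-only mode instead of failing.
    """
    username = env.get("TALK_USER", "").strip()
    password = env.get("TALK_APP_PASSWORD", "")
    if username and password:
        return TalkConfig(mode="user", username=username, password=password)
    return TalkConfig(mode="bot")


if __name__ == "__main__":
    config = load_talk_config()
    print(f"mode={config.mode} typing_indicator={config.supports_typing_indicator}")
```

None of this is hard, but it is exactly the part I skipped: in my deployment the equivalent of the "user" branch is simply baked in.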

But now I’m at this point where I ask myself - what is software now? If anyone can fairly easily customize software or build software from scratch to suit their exact needs, what is the future of open source development at all? What is the future of software development?

When I first heard of Claude Imagine, I was dubious, but it’s clear to me now that the people behind it grappled with this same question.

Claude Imagine doesn’t build “software”; it creates it on the fly in response to user input and use, writing code as you interact with the application. This might still be a laughable concept to some as of today, but consider the progress made in the last 12 months. Considering that AI seems to be advancing much faster than Moore’s law ever did for ICs / CPUs (and Moore’s law was already exponential), I believe that very soon (18-36 months) this will have a serious impact on just what a computing device is and what a computing device does (how it even works).

In short, I’m finding my very will to contribute changes to open source projects is receding, because I can get the functionality I need in very short order but the result is not suitable to push back to the community project, and taking the time to make it suitable is at odds with the new paradigm. I find that with the tools now at hand, time itself has become much more valuable, while wasting time doing the wrong things has become far more costly. The personal “effort economics” these tools create are likely to have a detrimental effect on contributions to open source projects. I just hope the capabilities these tools bring to serious FOSS developers, the ones who believe in the premise of open source above the utility of the software itself, will continue to tip the balance toward projects accelerating rather than declining.

Just my 2 cents after 1.5 days using OpenClaw.